The Difference Between Claude Opus and Sonnet: Understanding Model Tiers

Reading time: 9 minutes

You've probably noticed that Anthropic doesn't just offer one Claude model—they offer multiple versions with key differences in capability and cost.

Claude Opus 4, Claude Sonnet 4, and the lightweight tier each serve different purposes. GPT comes in GPT-4o and GPT-4o-mini. Gemini has Pro and Flash. Llama comes in 8B, 70B, and 405B sizes.

Why? And more importantly, which one should you actually use?

This guide explains the difference between Claude Opus 4 and Claude Sonnet 4—and how to choose the right model for your specific needs. By the end, you'll know exactly which tier works best for software development, content creation, or any other task.

Why Does Anthropic Offer Multiple Tiers?

Here's the fundamental truth about large language models: you can't have it all.

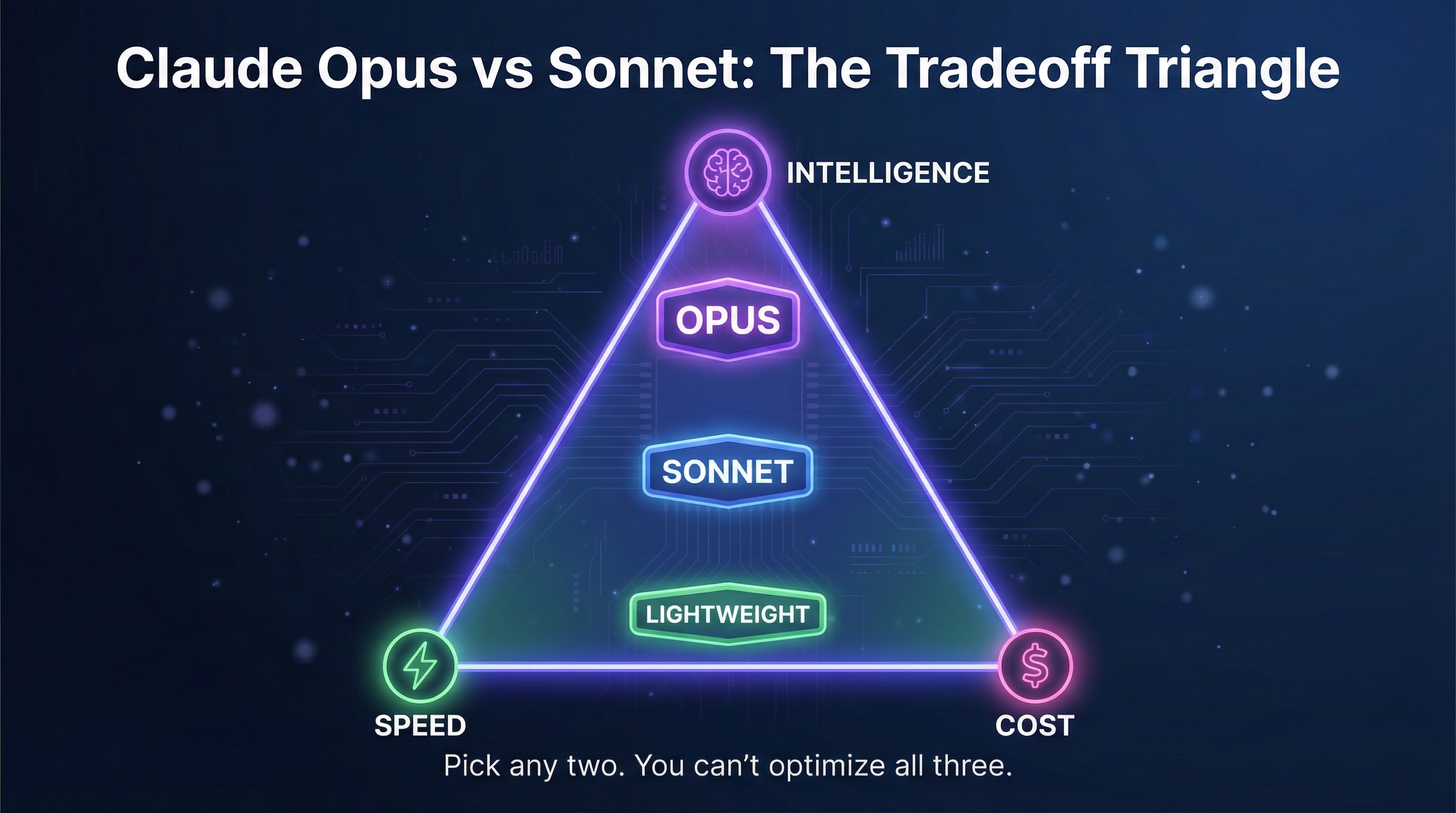

Every LLM involves tradeoffs between three things:

Intelligence — How capable, accurate, and nuanced the responses are

Speed — How fast you get a response

Cost — How much you pay per query

You can optimize for any two of these. But not all three.

Want maximum intelligence AND fast responses? That's expensive.

Want cheap AND smart? It's going to be slow.

Want fast AND cheap? You're sacrificing capability.

This is why Anthropic's Claude offers Opus, Sonnet, and a lightweight option. They're giving you the choice of which tradeoff works for your specific workflow, whether that's coding and complex analysis or scalable content creation.

The Tradeoff Triangle Explained

Think of it as a triangle with three corners:

           INTELLIGENCE
                /\
               /  \
              /    \
             / OPUS \
            /________\
           /  SONNET  \
          /____________\
         / LIGHTWEIGHT  \
        /________________\
    SPEED --------------- COST

Top of the triangle (Opus): Maximum intelligence with advanced reasoning capabilities, but slower and more expensive.

Middle of the triangle (Sonnet): Balanced. Strong AI capabilities, reasonable speed, moderate cost.

Bottom of the triangle (Lightweight): Fastest and most cost-effective, but less capable on tasks that require deep reasoning.

Claude Opus 4 vs Claude Sonnet 4: Key Differences

Let's break down Anthropic's Claude lineup and the key differences between tiers.

Claude Opus 4 — The Flagship

Anthropic describes Claude Opus 4 as their most intelligent offering, with state-of-the-art performance on challenging tasks.

Best for: Complex research, nuanced analysis, software engineering tasks, legal review, scientific reasoning, agentic workflows

Where Claude Opus 4 excels:

Advanced reasoning on difficult problems

Top coding performance on benchmarks like SWE-bench

Natural language understanding at the highest level

Tasks that require multi-step thinking

Analyzing large codebases

AI agents and agentic applications

When to use Opus:

You need the absolute best quality and accuracy

The task involves complex reasoning, especially in coding and complex problem-solving

You're building AI agents that need reliable decision-making

Mistakes are costly (legal, financial, medical contexts)

When NOT to use Opus:

Simple questions with straightforward answers

High-volume applications where cost matters

Tasks where speed is critical

Anything a simpler tier could handle adequately

Claude Sonnet 4 — The Balanced Choice

Claude Sonnet 4 offers strong performance at a significantly more cost-effective price point. Anthropic claims Sonnet 4 excels at everyday software development while being much faster than Opus.

Best for: Everyday tasks, coding assistance, writing, summarization, content creation, general conversation

Where Sonnet 4 excels:

Strong capability for most software engineering tasks

Good balance of speed and quality for software development

Handles roughly 80-90% of workflows well

Debug assistance and code review

Natural language processing tasks

Scalable applications

When to use Sonnet:

Most everyday tasks

Coding help, debug sessions, and code review

Writing and editing

Content creation and summarization

Customer-facing applications where you need quality but also speed

When NOT to use Sonnet:

Extremely complex reasoning tasks (use Opus instead)

High-volume simple tasks (use the lightweight tier instead)

The Lightweight Tier — Speed and Scale

Best for: High-volume processing, simple queries, quick lookups, real-time applications

When to use the lightweight option:

Processing thousands of queries

Simple classification or extraction

Real-time chat where latency matters

Cost-sensitive, scalable applications

Initial filtering before sending complex queries to Opus or Sonnet

Version History: Claude 3.5, 4, and 4.5

Understanding Anthropic's Claude version numbers helps you choose the right model:

Claude 3 Opus and Claude 3.5 Sonnet: Previous models that established the tier system. Claude 3.5 Sonnet was particularly notable for bridging the gap between cost and capability.

Claude Opus 4 and Claude Sonnet 4: The current generation, with improved AI capabilities across the board.

Version 4.5: Opus 4.5 continues the improvement over earlier versions, especially in advanced reasoning.

The introduction of Claude 4 brought significant improvements to both tiers, making Sonnet 4 and Claude Opus 4 more powerful AI models than their predecessors.

How Other Providers Structure Their Tiers

The same pattern exists everywhere. Here's how to translate:

OpenAI (GPT)

| Claude Equivalent | OpenAI Version | Notes |
| --- | --- | --- |
| Opus | GPT-4o | Full capability flagship |
| Sonnet | GPT-4o | Same model, no separate "balanced" tier |
| Lightweight | GPT-4o-mini | Smaller, faster, cheaper |

Is GPT-4o-mini good enough?

For many tasks, yes. GPT-4o-mini performs surprisingly well on standard queries and everyday coding. But for tasks that require advanced reasoning, GPT-4o is noticeably better—similar to the Sonnet vs Opus comparison.

Google (Gemini)

| Claude Equivalent | Gemini Version | Notes |
| --- | --- | --- |
| Opus | Gemini Pro / Ultra | Maximum capability |
| Sonnet | Gemini Pro | Balanced option |
| Lightweight | Gemini Flash | Speed-optimized |

Meta (Llama)

| Claude Equivalent | Llama Version | Notes |
| --- | --- | --- |
| Opus | Llama 405B | 405 billion parameters |
| Sonnet | Llama 70B | 70 billion parameters |
| Lightweight | Llama 8B | 8 billion parameters |

Real Cost Differences

The pricing gap between tiers is significant. Selecting the right tier can mean a 50-100x difference in cost.

Per 1 million tokens (approximate):

| Tier Level | Claude | OpenAI | Cost Difference (Claude, vs. flagship) |
| --- | --- | --- | --- |
| Flagship | $15/$75 (Opus) | $2.50/$10 (GPT-4o) | Baseline |
| Balanced | $3/$15 (Sonnet) | $2.50/$10 (GPT-4o) | About 5x cheaper |
| Lightweight | $0.25/$1.25 | $0.15/$0.60 (mini) | About 60x cheaper |

First number is input, second is output.

At these prices, running a workload on Sonnet instead of Opus could turn a $10,000 monthly bill into roughly $2,000, and routing simple queries to the lightweight tier could lower it further.
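To make the gap concrete, here is a minimal sketch that turns the approximate per-million-token prices from the table into a monthly bill. The workload size (200M input and 50M output tokens per month) and the names `PRICES` and `monthly_cost` are assumptions chosen for illustration, not figures from any real deployment.

```python
# Approximate USD prices per 1 million tokens (input, output), copied from the table above.
PRICES = {
    "opus": (15.00, 75.00),
    "sonnet": (3.00, 15.00),
    "lightweight": (0.25, 1.25),
}


def monthly_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Return the monthly bill in USD for a token volume on one tier."""
    input_price, output_price = PRICES[tier]
    return (input_tokens / 1_000_000) * input_price + (output_tokens / 1_000_000) * output_price


# Hypothetical workload: 200M input tokens and 50M output tokens per month.
INPUT_TOKENS = 200_000_000
OUTPUT_TOKENS = 50_000_000

opus_bill = monthly_cost("opus", INPUT_TOKENS, OUTPUT_TOKENS)
for tier in PRICES:
    bill = monthly_cost(tier, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{tier:12s} ${bill:>9,.2f}  ({opus_bill / bill:.0f}x cheaper than Opus)")
```

Under these assumptions the same workload costs $6,750 on Opus, $1,350 on Sonnet, and $112.50 on the lightweight tier. Because input and output prices scale by the same factor between tiers in this table, the ratio stays the same whatever the input/output mix.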

The Biggest Mistake: Using the Wrong Tier

Most people make one of two mistakes:

Mistake 1: Using Opus for Everything

"I want the best AI, so I'll always use Opus."

This sounds logical but it's wasteful. If you're using Claude Opus 4 to answer "What's the capital of France?" you're paying 50x more than necessary for no benefit. Simple questions don't benefit from Opus-level advanced reasoning.

Mistake 2: Using Lightweight for Complex Tasks

This backfires when you hit tasks that require real capability. Using the lightweight tier for software engineering tasks or intricate coding problems gives worse results—and you waste more time fixing the output than you saved.

How to Choose the Right Model: Opus vs Sonnet

Here's a framework for selecting the right tier:

Step 1: Start with Sonnet

Claude Sonnet 4 handles most workflows well. Use it as your default for software development, content creation, and general tasks.

Step 2: Upgrade to Opus ONLY when:

You need maximum accuracy, especially in complex reasoning

The task involves nuanced judgment calls

You're getting inadequate results from Sonnet

You're building AI agents that need reliable agentic decision-making

The cost of errors exceeds the cost of Opus

Step 3: Downgrade to lightweight when:

The task is simple and straightforward

You need scalable, high-volume processing

Speed matters more than maximum quality

Step 4: Test on real-world tasks

The best way to know which model is better for your specific needs? Test the same prompt across tiers and compare quality, speed, and cost.
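A side-by-side test can be as simple as the sketch below. It assumes each tier is wrapped in a plain function that takes a prompt and returns text; the `fake_*` functions are hypothetical stand-ins so the example runs offline, and you would replace them with real API calls. It records latency only. Quality still has to be judged by reading the replies or scoring them against your own rubric, and cost comes from the token counts your provider reports.

```python
import time
from typing import Callable, Dict, List


def compare_tiers(prompt: str, tiers: Dict[str, Callable[[str], str]]) -> List[dict]:
    """Send the same prompt to every tier and record the reply and latency."""
    results = []
    for name, ask in tiers.items():
        start = time.perf_counter()
        reply = ask(prompt)  # in practice, a call to the provider's API
        seconds = time.perf_counter() - start
        results.append({"tier": name, "seconds": seconds, "reply": reply})
    return results


# Hypothetical stand-ins so the sketch runs without network access.
def fake_opus(prompt: str) -> str:
    return f"[opus] {prompt}"


def fake_sonnet(prompt: str) -> str:
    return f"[sonnet] {prompt}"


def fake_lightweight(prompt: str) -> str:
    return f"[lightweight] {prompt}"


tiers = {"opus": fake_opus, "sonnet": fake_sonnet, "lightweight": fake_lightweight}
for row in compare_tiers("Summarize this bug report in two sentences.", tiers):
    print(f"{row['tier']:12s} {row['seconds']:.4f}s  {row['reply']}")
```

Running a handful of representative prompts through a harness like this usually tells you more than a benchmark table, because the prompts are your own.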

Tier Selection by Task Type

Here's a quick reference for selecting the right tier:

| Task Type | Recommended | Why |
| --- | --- | --- |
| Complex coding | Opus | Best coding model for difficult problems |
| Everyday coding | Sonnet | Good enough for most debug and review |
| Large codebase analysis | Opus | Needs advanced reasoning |
| Software engineering tasks | Sonnet or Opus | Depends on complexity |
| Content creation | Sonnet | Good balance of quality and speed |
| AI agents / agentic workflows | Opus | Needs reliable decision-making |
| Natural language processing | Sonnet | Strong NLP at reasonable cost |
| Research and analysis | Opus | Maximum capability needed |
| Scalable chatbot | Lightweight | Cost-effective at volume |
| Data extraction | Lightweight | Usually straightforward |

Advanced Strategy: Multiple Tiers, One Workflow

Sophisticated users don't pick one tier. They combine tiers, routing different queries to different models automatically.

How this workflow works:

A classifier evaluates incoming queries

Simple queries go to the lightweight tier

Complex queries go to Opus

Everything else goes to Sonnet

This approach is significantly more cost-effective than using Opus for everything while maintaining quality where it matters.
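The routing steps above can be sketched in a few lines. Everything here is an assumption for illustration: `toy_classifier` is a crude keyword-and-length heuristic, and the marker words and the eight-word cutoff are arbitrary. In a real system the classifier is often a call to the lightweight tier itself, or a small trained model.

```python
from typing import Callable

# Hypothetical keywords that hint a query needs deeper reasoning.
COMPLEX_MARKERS = ("refactor", "architecture", "prove", "legal", "multi-step")

# Map each classifier label to a tier; anything unlisted falls through to Sonnet.
ROUTES = {"simple": "lightweight", "complex": "opus"}


def toy_classifier(query: str) -> str:
    """Label a query 'simple', 'complex', or 'standard' with a crude heuristic."""
    text = query.lower()
    if any(marker in text for marker in COMPLEX_MARKERS):
        return "complex"
    if len(text.split()) <= 8:
        return "simple"
    return "standard"


def route(query: str, classify: Callable[[str], str] = toy_classifier) -> str:
    """Return the tier a query should be sent to."""
    return ROUTES.get(classify(query), "sonnet")


queries = [
    "What's the capital of France?",
    "Refactor this module to separate the database layer from the HTTP handlers",
    "Summarize this customer email thread and draft a polite reply for the support team",
]
for query in queries:
    print(f"{route(query):12s} <- {query}")
```

With this heuristic the three sample queries go to the lightweight tier, Opus, and Sonnet respectively. Keeping Sonnet as the fallback mirrors the "start with Sonnet" default: a query only leaves the middle tier when the classifier has a reason to move it.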

Performance on SWE-bench and Other Benchmarks

When comparing Claude Opus 4 and Claude Sonnet 4, benchmarks like SWE-bench (software engineering) show clear differences:

Claude Opus 4 achieves state-of-the-art scores on difficult coding tasks

Claude Sonnet 4 performs well on standard software development but trails Opus on the hardest problems

However, real-world performance on YOUR tasks matters more than benchmark scores. The best coding model for your codebase depends on your specific needs.

Key Takeaways

The tradeoff is real: You can't have maximum intelligence, speed, AND low cost simultaneously.

Claude Opus 4 is the strongest tier for tasks that require advanced reasoning, AI agents, and complex software engineering.

Claude Sonnet 4 handles 80-90% of workflows including everyday coding, content creation, and natural language tasks.

Know the key differences: Opus excels at complex reasoning; Sonnet excels at balanced, cost-effective performance.

Choose the right model for the task: Don't default to Opus for everything or lightweight for complex tasks.

The cost difference is massive: Selecting the right tier can cut costs by 50-100x.

What's Next

Now that you understand Claude Opus 4 and Claude Sonnet 4, the next question is: what about specialized "reasoning" models like OpenAI's o1 and o3? How are they different?

In Part 3, we'll explore reasoning models—what they are, how they work, and when the extra "thinking" time is worth it.

Coming up in this series:

Part 3: What Is a Reasoning Model? (o1, o3, and Chain of Thought)

Part 4: What Is a Context Window?

Part 5: Can ChatGPT See Images? (Multimodal Capabilities)

Part 6: Can I Run LLMs on My Own Computer? (Open vs. Closed)

Part 7: How Much Does the ChatGPT API Cost?

Part 8: Which LLM Should I Use? (Decision Framework)

Your Turn

Have you tested Claude Opus 4 and Claude Sonnet 4 on your own tasks? Which model is better for your specific workflow?

Let me know in the comments how you're selecting the right tier for your software development or content creation work.

Found this helpful? Share it with someone trying to choose the right model. And follow along for the rest of this series.