What Is a Reasoning Model? Understanding O1, Chain of Thought, and Extended Thinking

Reading time: 10 minutes

You've probably heard about OpenAI's new releases or seen mentions of "chain-of-thought" and "extended thinking" in recent AI research discussions.

But what exactly is a reasoning model? How do reasoning models work differently from traditional AI models? And when should you actually use one?

This guide explains how these specialized LLMs approach problems, when reasoning models excel compared to standard options, and when they're overkill. By the end, you'll know exactly when the extra time and cost is worth it.

What Is a Reasoning Model?

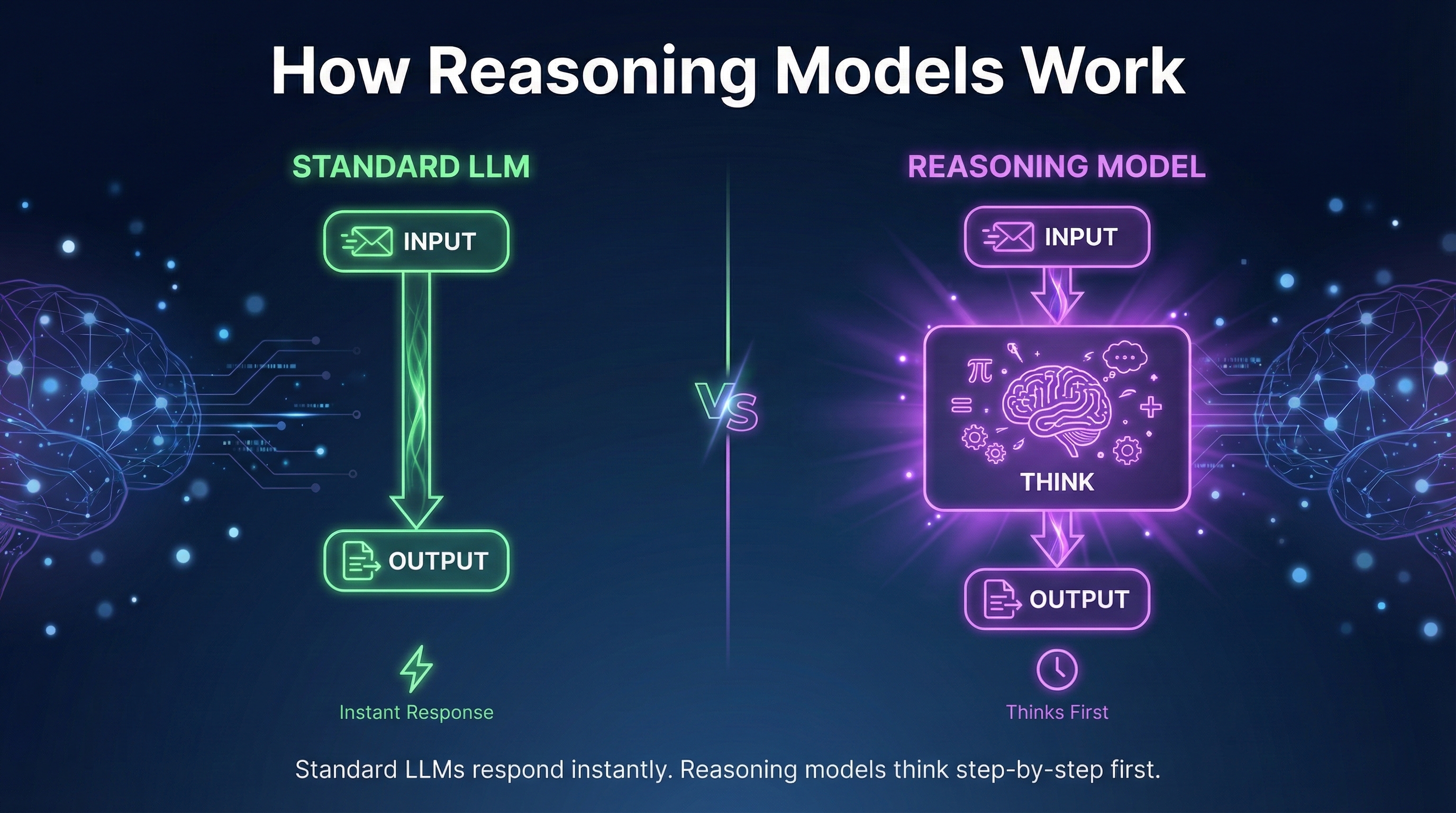

A reasoning model is a type of large language model specifically designed to engage in multi-step reasoning before producing output.

Traditional AI models generate responses immediately—they read your input and start producing text right away. They're fast, but they don't pause to plan or verify their logic.

Reasoning models add an explicit thinking phase. Before the model generates the final answer, it works through the problem methodically, considering different approaches and checking its logical reasoning.

Think of it like this:

Standard LLM: Student who immediately starts writing an essay without an outline.

Reasoning model: Student who outlines, drafts, reviews, then writes the final version.

This longer reasoning takes more time, but for tasks requiring mathematical reasoning or logical reasoning, the results are significantly better.

How Does Chain-of-Thought Reasoning Work?

Chain-of-thought (often abbreviated as CoT) is the technique that makes reasoning models work.

Instead of jumping straight to an answer, the model generates intermediate steps that lead to the final output. This thought process uses reasoning tokens—tokens dedicated to working through the problem rather than producing the final answer.

Example without CoT reasoning:

Input: If I have 3 boxes with 4 apples each, and I give away 5 apples, how many do I have?

Output: 7 apples.

Example with chain-of-thought reasoning:

Input: If I have 3 boxes with 4 apples each, and I give away 5 apples, how many do I have?

Reasoning:

- First, calculate total apples: 3 boxes × 4 apples = 12 apples

- Then subtract apples given away: 12 - 5 = 7 apples

- Verify: 3×4=12, 12-5=7 ✓

Output: 7 apples.

This step-by-step reasoning approach dramatically improves accuracy on tasks requiring careful analysis.
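To make the structure of the apples example concrete, here is a small Python sketch that records each intermediate step before returning the answer. It is only an analogy in ordinary code: the function name `apples_remaining` is made up for illustration, and a language model does not literally run code like this internally.

```python
def apples_remaining(boxes: int, apples_per_box: int, given_away: int) -> tuple[int, list[str]]:
    """Return the final answer together with the intermediate steps."""
    steps = []

    # Step 1: total apples across all boxes.
    total = boxes * apples_per_box
    steps.append(f"Total apples: {boxes} x {apples_per_box} = {total}")

    # Step 2: subtract the apples given away.
    remaining = total - given_away
    steps.append(f"After giving away {given_away}: {total} - {given_away} = {remaining}")

    # Step 3: verify by recomputing the whole expression in one go.
    if remaining != boxes * apples_per_box - given_away:
        raise ValueError("verification failed")
    steps.append("Verified")

    return remaining, steps


answer, steps = apples_remaining(3, 4, 5)
for step in steps:
    print(step)
print(f"Output: {answer} apples")
```

The point of the sketch is that the answer arrives with a trail you can check, which is what the intermediate steps give you in a chain-of-thought response.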

Major Options Available Today

Several frontier AI labs now offer dedicated reasoning abilities:

OpenAI Models

OpenAI's o1 was the company's first dedicated model for complex reasoning tasks. It uses chain-of-thought internally, spending more compute time "thinking" before responding.

Key characteristics:

Extended thinking phase before the model generates output

Significantly better at math, coding, and logic

Slower than GPT-4 (seconds to minutes vs. instant)

More expensive per query

Reasoning traces are partially hidden from users

Claude Extended Thinking

Anthropic's approach allows Claude to engage in longer reasoning for difficult problems. When enabled, it works through tasks methodically before providing the final answer.
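As a rough sketch of what "enabled" means in practice, the snippet below builds a request body for Anthropic's Messages API with a `thinking` block that sets a token budget for reasoning. It only constructs the payload and makes no network call. The helper name `build_thinking_request`, the default numbers, and the `MODEL_NAME_HERE` placeholder are assumptions for illustration; check the current API documentation for supported models and limits.

```python
import json


def build_thinking_request(prompt: str, budget_tokens: int = 4000, max_tokens: int = 8000) -> dict:
    """Build a Messages API request body with extended thinking turned on."""
    # To my understanding, the thinking budget has to fit inside max_tokens.
    if budget_tokens >= max_tokens:
        raise ValueError("budget_tokens must be smaller than max_tokens")
    return {
        "model": "MODEL_NAME_HERE",  # placeholder, not a real model ID
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }


body = build_thinking_request("Is 2027 a prime number? Explain your reasoning.")
print(json.dumps(body, indent=2))
```

A larger budget gives the model more room to reason on hard problems, at the price of more latency and more billed tokens.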

DeepSeek-R1 Model

The DeepSeek-R1 model is an open source option that achieves competitive performance. It's notable because:

Open weights (you can run it locally)

Transparent reasoning traces you can inspect

Trained using reinforcement learning

Strong performance on benchmarks

Demonstrates that smaller models can develop strong reasoning abilities

DeepSeek-R1 and similar open models show that these capabilities aren't limited to closed frontier AI systems.
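Because the reasoning trace is visible, you can separate it from the final answer in your own code. The sketch below assumes the raw output wraps its reasoning in `<think>...</think>` tags, which is how DeepSeek-R1 typically formats it. The helper name `split_reasoning` and the sample text are made up for illustration.

```python
import re

# Matches the first <think>...</think> block, including newlines inside it.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(raw_output: str) -> tuple[str, str]:
    """Split raw model text into (reasoning, final_answer)."""
    match = THINK_BLOCK.search(raw_output)
    if match is None:
        # No trace found: treat the whole text as the answer.
        return "", raw_output.strip()
    reasoning = match.group(1).strip()
    answer = raw_output[match.end():].strip()
    return reasoning, answer


sample = "<think>3 x 4 = 12, then 12 - 5 = 7.</think>\nYou have 7 apples."
reasoning, answer = split_reasoning(sample)
print("Reasoning:", reasoning)
print("Answer:", answer)
```

Keeping the two parts separate lets you log or audit the trace while showing users only the answer.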

Llama Models and Open Alternatives

Meta's Llama models and other open source options are increasingly incorporating reasoning strategies. While the base model versions focus on general capabilities, fine-tuned variants target specific reasoning tasks.

How Reasoning Models Work: The Technical View

Understanding how reasoning models work helps you use them effectively.

Foundation models are trained primarily on next-token prediction—the model learns to produce text that follows naturally from the input.

Reasoning-focused systems add additional training:

Reinforcement learning: The model learns which reasoning strategies lead to correct answers through feedback signals. AI researchers have found that reasoning models trained this way show emergent reasoning abilities.

Process reward models: Instead of just rewarding correct final answers, these reward good reasoning, encouraging sound logic even if the conclusion has minor errors.

Chain-of-thought fine-tuning: Training on examples that include explicit reasoning teaches the model to approach problems systematically. The model learns to break down problems before solving them.

Through this training, the model learns different reasoning approaches and develops stronger reasoning abilities than traditional AI models.
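The difference between rewarding only the final answer and rewarding the reasoning itself can be shown with a toy example. This is a minimal sketch, not how any lab actually trains its models: the function names are hypothetical, and the step checker only understands lines of the form `a op b = c`.

```python
import operator
from typing import Callable

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def outcome_reward(final_answer: str, correct_answer: str) -> float:
    """Reward only the final answer: 1.0 if it matches, otherwise 0.0."""
    return 1.0 if final_answer.strip() == correct_answer.strip() else 0.0


def process_reward(steps: list[str], step_is_valid: Callable[[str], bool]) -> float:
    """Reward the reasoning: the fraction of steps that pass the checker."""
    if not steps:
        return 0.0
    return sum(step_is_valid(step) for step in steps) / len(steps)


def arithmetic_step_is_valid(step: str) -> bool:
    """Toy checker for steps written as 'a op b = c' with integer operands."""
    parts = step.split()
    if len(parts) != 5 or parts[1] not in OPS or parts[3] != "=":
        return False
    try:
        a, b, c = int(parts[0]), int(parts[2]), int(parts[4])
    except ValueError:
        return False
    return OPS[parts[1]](a, b) == c


# One sound step followed by a slip in the subtraction.
steps = ["3 * 4 = 12", "12 - 5 = 8"]
print(outcome_reward("8", "7"))                        # 0.0
print(process_reward(steps, arithmetic_step_is_valid)) # 0.5
```

The outcome reward gives no credit at all, while the process reward still recognizes that the first step was sound, which is the kind of signal that encourages good reasoning.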

When Do Reasoning Models Excel?

These systems excel at specific types of tasks. Here's when the extra effort is worth it:

Use Reasoning-Focused Models For:

Mathematical reasoning: Multi-step calculations, word problems, proofs. Step-by-step reasoning catches errors that standard LLMs miss.

Logical reasoning: Problems with constraints, deductive reasoning, or tricky logic benefit enormously from explicit reasoning chains.

Difficult coding challenges: Algorithm design, debugging complex problems. AI research shows major improvements on coding benchmarks with these approaches.

Scientific reasoning: Problems requiring hypothesis testing, analysis of evidence, or technical analysis.

Multi-step reasoning tasks: Tasks where the model needs to consider consequences, evaluate multiple approaches, and plan before acting. Essential for agentic AI applications.

Legal and financial analysis: Nuanced reasoning about regulations, contracts, or financial scenarios where careful analysis matters.

When Standard LLMs Are Better:

Simple questions: Basic queries don't need extended thinking. You're paying for compute you don't need.

Creative writing: Brainstorming and storytelling don't benefit from longer reasoning. Standard generative AI applications are often better, because reasoning-focused systems can actually "overthink" creative tasks.

Quick conversations: Casual chat and simple Q&A where speed matters more than deep analysis.

Summarization and translation: These tasks don't require the reasoning abilities that justify the extra cost.

The Cost: Time and Compute

These specialized systems require significantly more compute than standard options. The reasoning—all those intermediate steps—takes time and processing power.

What this means in practice:

Latency: An input that gets a near-instant response from GPT-4 might take 30 seconds to 2 minutes

Cost: Pricing is typically 3-10x higher per query

Token usage: Reasoning tokens add up, even if you don't see them all in the model outputs

For high-volume applications, this matters. Running thousands of queries through a reasoning-focused system when a standard LLM would suffice wastes significant resources.
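A quick back-of-the-envelope estimate shows how reasoning tokens change the bill. The sketch below assumes reasoning tokens are billed at the output-token rate, which is common but worth confirming with your provider. The prices and token counts are invented placeholders, not real rates.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    """Prices in dollars per million tokens (hypothetical values below)."""
    input_per_million: float
    output_per_million: float


def estimate_cost(pricing: Pricing, input_tokens: int,
                  visible_output_tokens: int, reasoning_tokens: int = 0) -> float:
    """Estimate the cost of one query in dollars."""
    # Assumption: hidden reasoning tokens are billed like output tokens.
    billed_output = visible_output_tokens + reasoning_tokens
    total = (input_tokens * pricing.input_per_million
             + billed_output * pricing.output_per_million)
    return total / 1_000_000


# Made-up prices for illustration only.
STANDARD = Pricing(input_per_million=1.0, output_per_million=4.0)
REASONING = Pricing(input_per_million=3.0, output_per_million=12.0)

standard_cost = estimate_cost(STANDARD, input_tokens=500, visible_output_tokens=300)
reasoning_cost = estimate_cost(REASONING, input_tokens=500,
                               visible_output_tokens=300, reasoning_tokens=2000)

queries = 10_000
print(f"Standard:  ${standard_cost * queries:.2f} per {queries} queries")
print(f"Reasoning: ${reasoning_cost * queries:.2f} per {queries} queries")
```

With these placeholder numbers, most of the gap comes from the reasoning tokens rather than the higher per-token price, which is why the same visible answer can cost much more.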

Practical Example: Coding Task Comparison

Let's see how a standard LLM vs a reasoning-focused approach handles a complex problem:

Input:

Write a function that finds the longest palindromic substring. Optimize for time complexity.

Standard LLM approach: The model generates code immediately. It might produce a working solution, but it may miss edge cases or use a suboptimal algorithm because it didn't plan first.

Reasoning-focused approach:

Reasoning:

1. What approaches exist for this problem?

- Brute force: O(n³), check all substrings

- Dynamic programming: O(n²) time, O(n²) space

- Expand around center: O(n²) time, O(1) space

- Manacher's algorithm: O(n) time, O(n) space

2. For optimization, Manacher's is best but complex. Expand around center balances efficiency and clarity.

3. Edge cases to handle:

- Empty string

- Single character

- All same characters

4. Implementing expand around center...

Then the model generates the code with proper optimization and edge case handling. The reasoning effort pays off in better results.
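For reference, here is one way the expand-around-center plan could look in Python. It is a sketch written for this article rather than actual model output, and the function name `longest_palindrome` is an arbitrary choice.

```python
def longest_palindrome(s: str) -> str:
    """Return the longest palindromic substring of s.

    Expand around center: O(n^2) time, O(1) extra space.
    """
    if not s:
        return ""  # edge case: empty string

    def expand(left: int, right: int) -> tuple[int, int]:
        """Grow outward while the ends match; return (start, length)."""
        while left >= 0 and right < len(s) and s[left] == s[right]:
            left -= 1
            right += 1
        # The loop overshoots by one position on each side.
        return left + 1, right - left - 1

    best_start, best_len = 0, 1  # any single character is a palindrome
    for center in range(len(s)):
        odd = expand(center, center)        # odd length, e.g. "aba"
        even = expand(center, center + 1)   # even length, e.g. "abba"
        for start, length in (odd, even):
            if length > best_len:
                best_start, best_len = start, length

    return s[best_start:best_start + best_len]


print(longest_palindrome("babad"))  # bab
print(longest_palindrome("cbbd"))   # bb
```

All three edge cases from the reasoning trace are covered: the empty string returns early, a single character falls out of the initial `best_len = 1`, and a run of identical characters expands to the full string.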

Encouraging Better Reasoning in Any LLM

Even with standard language models, you can encourage better reasoning through your input:

Basic input:

What is 47 × 89?

Input encouraging reasoning:

What is 47 × 89? Work through this step-by-step and show your work.

Adding reasoning encouragement to your input can improve quality even with standard LLMs. However, dedicated systems build this into their core approach automatically and use specialized reasoning strategies that produce better results.
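If you send many prompts programmatically, the same nudge can be added with a small helper. This is a minimal sketch; the name `encourage_reasoning` is made up, and the suffix is simply the wording from the example above.

```python
REASONING_SUFFIX = "Work through this step-by-step and show your work."


def encourage_reasoning(question: str, suffix: str = REASONING_SUFFIX) -> str:
    """Append a step-by-step instruction unless it is already present."""
    question = question.strip()
    if suffix in question:
        return question
    return f"{question} {suffix}"


print(encourage_reasoning("What is 47 × 89?"))

# The kind of working a good response would show:
# 47 × 89 = 47 × 90 - 47 = 4230 - 47 = 4183
assert 47 * 89 == 47 * 90 - 47 == 4183
```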

Agentic AI and Reasoning

Reasoning abilities become especially important for agentic AI—systems that take actions, use tools, and work through tasks autonomously.

An agentic workflow might involve:

Understanding the goal

Planning a sequence of actions

Executing each step

Evaluating results

Adjusting the approach if needed

Standard LLMs can struggle with this because models don't naturally pause to plan. Systems with stronger reasoning abilities are better suited for agent applications where thinking before acting prevents costly mistakes.
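The workflow above maps naturally onto a loop. The following is a toy sketch with hypothetical stand-in functions: in a real agent, `plan` and `evaluate` would call a model and `execute` would call tools.

```python
from typing import Callable

History = list[tuple[str, str]]


def run_agent(goal: int,
              plan: Callable[[int, History], list[str]],
              execute: Callable[[str], str],
              evaluate: Callable[[int, History], bool],
              max_rounds: int = 3) -> History:
    """Plan, execute, evaluate, and re-plan until the goal is met."""
    history: History = []
    for _ in range(max_rounds):
        # Plan using everything learned so far; this is the "adjust" step.
        for step in plan(goal, history):
            history.append((step, execute(step)))
        if evaluate(goal, history):
            break
    return history


# Toy stand-ins: the goal is a number of actions to complete,
# and each plan may schedule at most two actions.
def toy_plan(goal: int, history: History) -> list[str]:
    return ["add one"] * min(2, goal - len(history))


def toy_execute(step: str) -> str:
    return "ok"


def toy_evaluate(goal: int, history: History) -> bool:
    return len(history) >= goal


history = run_agent(3, toy_plan, toy_execute, toy_evaluate)
print(len(history))  # 3
```

The `max_rounds` cap is a design choice worth keeping in real systems too, since it stops an agent from re-planning forever when the goal is out of reach.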

Research has found that reasoning models significantly outperform traditional AI models on agentic benchmarks.

The Rise of Reasoning in AI Research

AI researchers have increasingly focused on the development of reasoning capabilities. Key findings:

Reasoning models are specifically trained to handle multi-step reasoning

Longer reasoning sequences generally improve accuracy on hard problems

Smaller models with focused reasoning training can outperform larger base model versions

Human reasoning patterns can inform training approaches

The best reasoning results come from combining multiple reasoning methods

This AI research continues to push the boundaries of what's possible with reasoning-focused systems.

Should You Use a Reasoning-Focused Approach?

Here's a practical decision framework:

Use reasoning-focused systems when:

The task involves mathematical reasoning or logical reasoning

Accuracy matters more than speed

You're working on coding challenges or algorithm design

The problem requires multi-step reasoning

You're building agentic AI that needs reliable decision-making

Use standard LLMs when:

Speed matters

The task is straightforward

You're doing creative writing or brainstorming

Cost is a primary concern

You're processing a high volume of queries

The hybrid approach: Many applications use standard systems for most queries and route complex requests to reasoning-focused options. This balances cost, speed, and accuracy.
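A router for the hybrid approach can start out very simple. The sketch below uses keyword and length heuristics that are purely illustrative assumptions; production systems often use a small classifier model instead, and the hint words and threshold here are invented.

```python
import re

# Hypothetical hint words that suggest a query needs multi-step reasoning.
REASONING_HINTS = frozenset(
    {"prove", "debug", "optimize", "algorithm", "calculate", "step-by-step"}
)


def choose_model(query: str, max_simple_words: int = 40) -> str:
    """Return 'reasoning' for queries that look complex, else 'standard'."""
    # Match whole words so that "improve" does not trigger "prove".
    words = re.findall(r"[a-z][a-z\-]*", query.lower())
    if REASONING_HINTS & set(words):
        return "reasoning"
    if len(words) > max_simple_words:
        return "reasoning"
    return "standard"


print(choose_model("Summarize this email in two sentences."))  # standard
print(choose_model("Debug this recursive algorithm."))         # reasoning
```

Starting with cheap heuristics and logging where they misroute gives you data to decide whether a smarter router is worth building.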

Key Takeaways

Reasoning models think before answering: They add an explicit thinking phase before the model generates the final output.

Chain-of-thought reasoning is the core technique: The system works through intermediate reasoning before the conclusion.

Major options include OpenAI's reasoning models, Claude Extended Thinking, DeepSeek-R1, and open models with reasoning training.

Reasoning models excel at complex tasks: Mathematical reasoning, logical reasoning, difficult coding, and multi-step reasoning tasks.

Traditional AI models are better for simple tasks: Creative writing, quick Q&A, and summarization don't benefit from extended reasoning.

The tradeoff is time and compute: These systems are slower and more expensive, but significantly more accurate on hard problems.

Open source options exist: DeepSeek-R1 and Llama models show that reasoning abilities aren't limited to closed systems.

What's Next

Now that you understand reasoning approaches, the next question is: how much information can these LLMs actually process at once?

In Part 4, we'll explore context windows—what they are, why they matter, and how to work effectively when you have lots of information to process.

Coming up in this series:

Part 4: What Is a Context Window?

Part 5: Can ChatGPT See Images? (Multimodal Capabilities)

Part 6: Can I Run LLMs on My Own Computer? (Open vs. Closed)

Part 7: How Much Does the ChatGPT API Cost?

Part 8: Which LLM Should I Use? (Decision Framework)

Your Turn

Have you tried reasoning-focused approaches? How did the output compare to standard options?

What reasoning tasks have you found where the extra thinking time was worth it? Share your experience in the comments.

Found this helpful? Share it with someone trying to understand these concepts. And follow along for the rest of this series.